One-sentence summary: GPT did not appear from nowhere; it is the result of a decade of deep learning, scale, and the Transformer architecture.

1.1 A Gentle Starting Point

This chapter is intentionally light. No math, no code. We are going to answer one question:

Where did GPT come from?

When many people first used ChatGPT, it felt like the technology had fallen from the sky. But the architecture behind it, the Transformer, had been building momentum for years before the product moment arrived.

The history matters because it helps you:

- Build intuition: why did the Transformer replace RNNs and LSTMs in language modeling?

- Understand the players: why do OpenAI, Google, Meta, and other labs matter in different ways?

- Read the trend line: how did chat models lead toward agents, deep research, and world models?

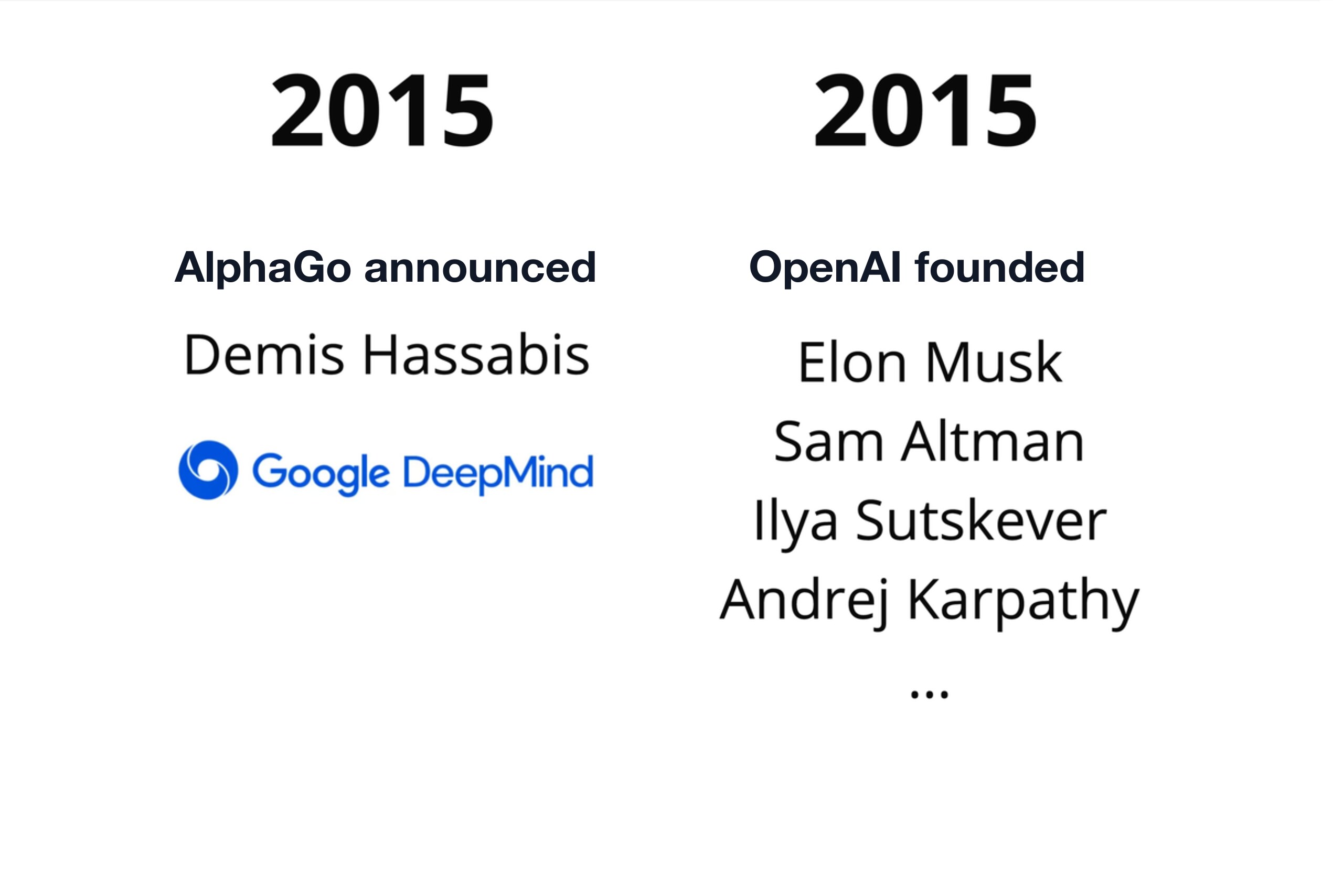

1.2 2015: Two Seeds

Two events in 2015 shaped much of the AI landscape that followed.

1.2.1 AlphaGo

The first event was AlphaGo.

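DeepMind, led by Demis Hassabis and acquired by Google in 2014, built a system that could beat professional Go players. In October 2015, AlphaGo defeated European champion Fan Hui, the first time a program beat a professional player on a full-size board without a handicap. In March 2016, it beat Lee Sedol, one of the strongest players in the world. Go was considered much harder than chess because the search space is enormous: Go has roughly 10^170 legal board positions, far more than the roughly 10^80 atoms in the observable universe. Many people expected AI to need another decade to crack it. AlphaGo showed that deep learning combined with reinforcement learning could solve problems many people thought were still far away.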

The important lesson was simple:

Deep learning plus scale can produce surprising jumps in capability.

1.2.2 OpenAI

The second event was the founding of OpenAI.

The original idea was that AI was too important to be controlled by only one company. OpenAI was founded in December 2015, and the founding group included people such as Elon Musk, Sam Altman, Ilya Sutskever, Greg Brockman, Wojciech Zaremba, and Andrej Karpathy.

OpenAI began as a non-profit research lab with an ambitious mission: build powerful AI while keeping the benefits broadly shared.

That mission would later collide with a practical problem: frontier AI needs huge amounts of compute, data, and talent.

1.3 Key People

Before continuing the timeline, it helps to know a few names. The field is large, but the early LLM story is still shaped by a surprisingly small network of researchers, teachers, founders, and engineers.

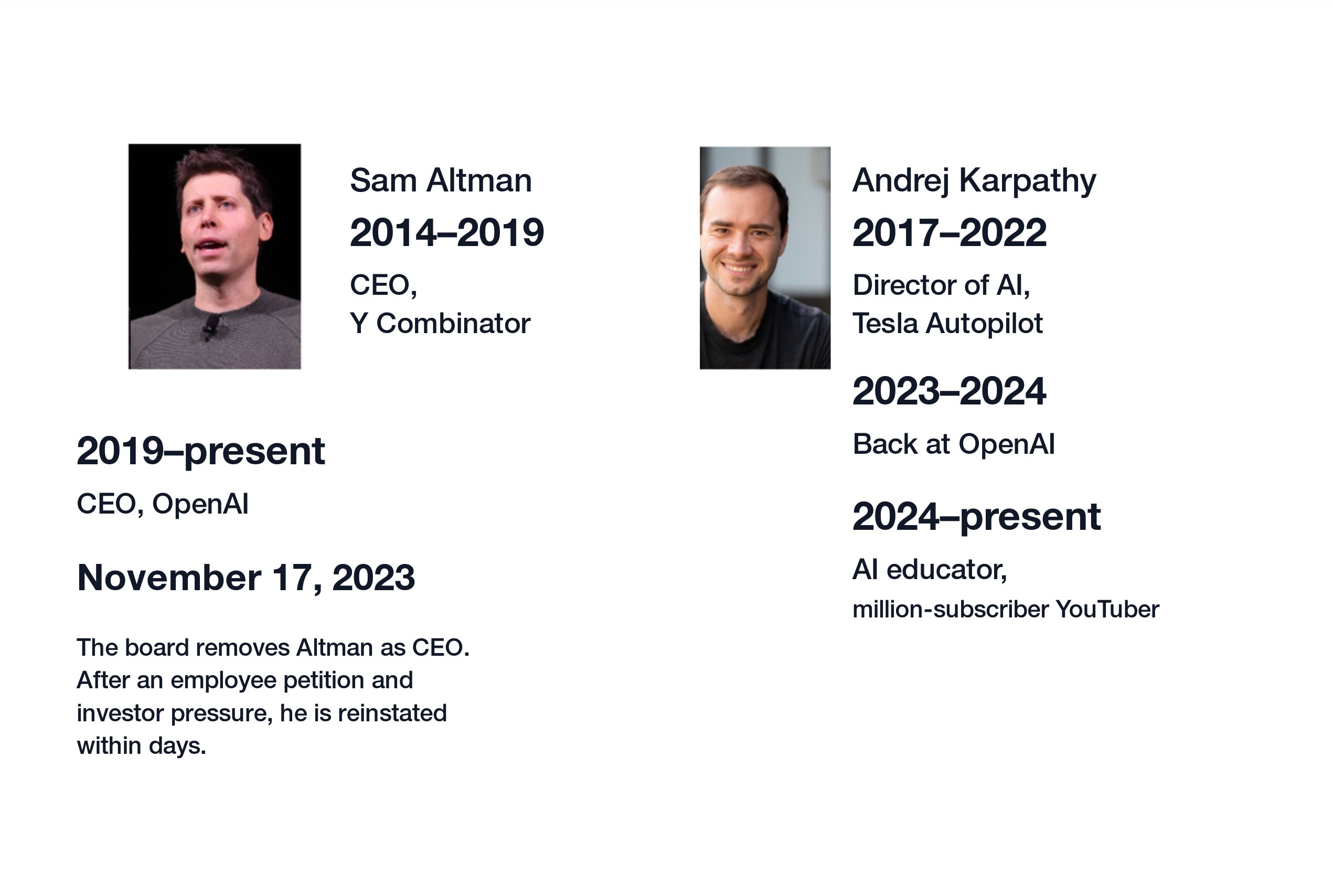

1.3.1 Sam Altman

Sam Altman led Y Combinator from 2014 to 2019 and became OpenAI's CEO in 2019. He is not known primarily as a deep learning researcher. His strength is organizational: recruiting people, allocating capital, and turning research direction into product direction.

That mattered because training large models quickly became an infrastructure and business problem, not only a paper-writing problem.

1.3.2 Ilya Sutskever

Ilya Sutskever is one of the core deep learning researchers behind OpenAI, where he was a co-founder and chief scientist. He did his PhD under Geoffrey Hinton, co-authored the 2012 AlexNet paper that helped launch the deep learning boom in computer vision, and brought that research lineage into the foundation of GPT-style models.

One of OpenAI's decisive choices was to apply the Transformer architecture to language modeling at scale.

1.3.3 Andrej Karpathy

Andrej Karpathy is unusually important as both a builder and a teacher. From 2017 to 2022, he served as Tesla's Director of AI and worked on the vision systems behind self-driving. Before that, his Stanford CS231n course helped make modern computer vision and deep learning feel teachable instead of mystical.

His lectures, his code, and his famous framing that "a large language model is just two files," which we will discuss in Chapter 2, are among the clearest ways to demystify LLMs.

1.3.4 The Toronto Connection

The University of Toronto was one of the centers of the deep learning revival.

- Geoffrey Hinton helped establish modern deep learning.

- Ilya Sutskever studied under Hinton.

- Wayland Zhang, the author, studied computer science at the University of Toronto in the same era before returning to build companies.

- Andrej Karpathy did his undergraduate degree at the University of Toronto from 2005 to 2009, then a master's at the University of British Columbia, before his PhD at Stanford.

This is not a story about one school owning the field. It is a reminder that ideas travel through people. A small research lineage can influence Google, OpenAI, Tesla, Meta, and the broader open-source community.

1.4 2017: Transformer Appears

2017 is the most important year in this book.

1.4.1 The Paper That Changed the Map

In 2017, a Google research team published "Attention Is All You Need."

The paper introduced the Transformer architecture. Its central claim was bold:

You do not need recurrence. You do not need convolution. Attention can carry the sequence modeling burden.

At the time, RNNs and LSTMs were standard tools for natural language processing. The Transformer looked strange because it processed tokens in parallel and used Attention to connect positions to each other.

The advantages turned out to be exactly what large-scale training needed:

- Parallel computation: during training, every position in a sequence can be processed at once, instead of one step at a time as in an RNN.

- Long-range relationships: Attention can directly connect tokens far apart in the sequence.

- Scalability: the architecture maps well to GPU and TPU matrix operations.

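1.4.2 GPT Means Transformer for Generation

In 2018, OpenAI released GPT-1, which used the decoder part of the Transformer as a generative language model: pre-train it to predict the next token on large amounts of text, then fine-tune it for specific tasks.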

GPT stands for:

- Generative: it can generate new text.

- Pre-trained: it first learns from large-scale text.

- Transformer: the architecture doing the work.

The original Transformer was demonstrated on machine translation. GPT-1 marks the moment when that machine translation architecture began turning into a general language model architecture.

1.5 2018-2019: OpenAI Changes Shape

Around the same period, OpenAI itself changed.

1.5.1 From Research Ideal to Compute Reality

Large models are expensive. They need GPUs, data infrastructure, distributed training, and a team that can debug failures across hardware and software.

That made a pure non-profit structure difficult. In 2019, OpenAI created a capped-profit company, OpenAI LP, controlled by the non-profit, so it could raise the money required to compete at the frontier.

This move remains controversial, but the underlying pressure is easy to understand: scaling language models is capital-intensive.

1.5.2 Elon Musk Leaves the Board

In 2018, Elon Musk left OpenAI's board. The public explanation was potential conflict with Tesla's own AI work. The broader lesson is more durable than the gossip: once AI became strategic, questions of mission, control, capital, and competition could no longer be kept separate.

1.5.3 The Governance Tension

AI labs do not only make models. They make decisions about release, safety, commercialization, and control. The tension between mission and capital did not disappear. It became part of the story.

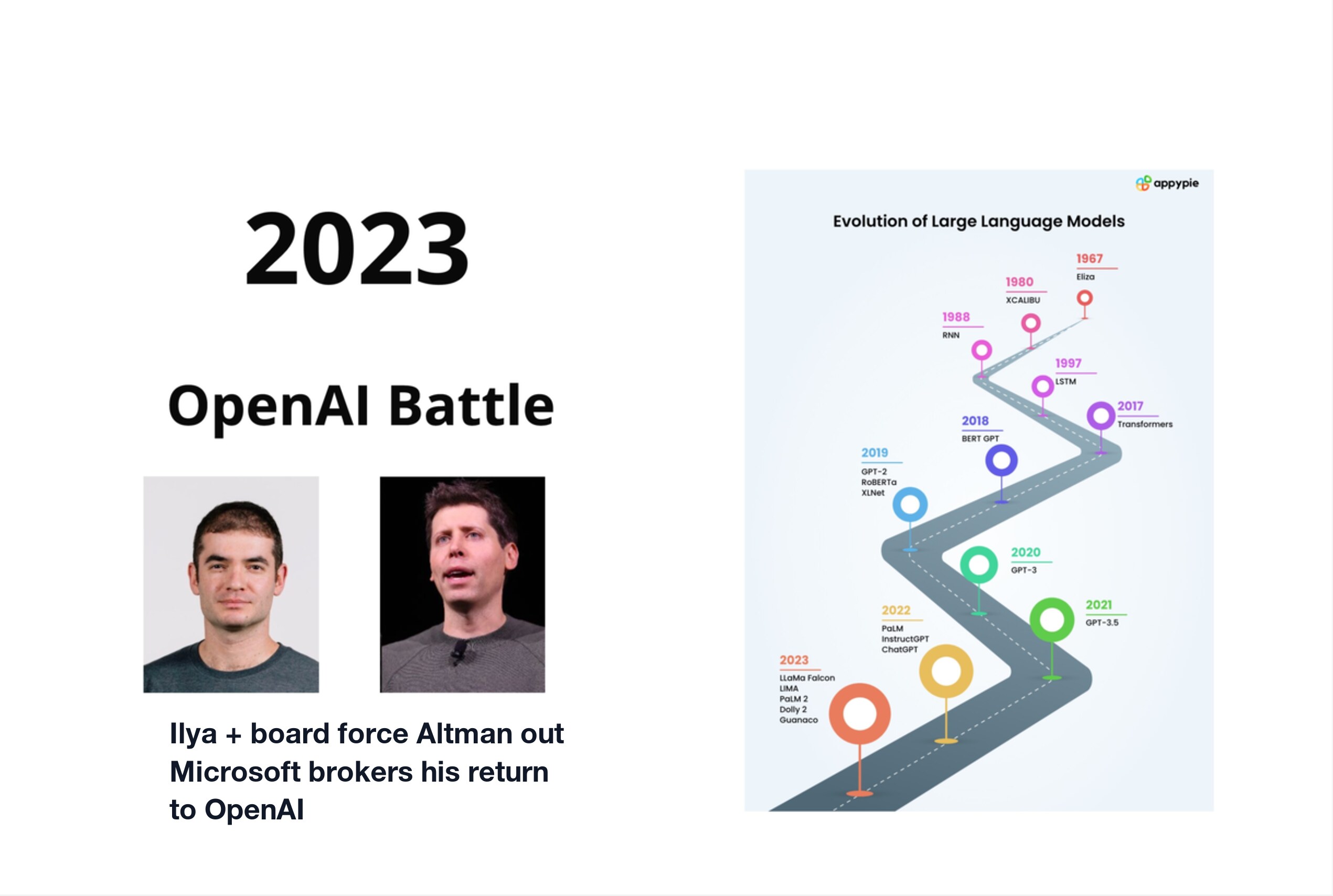

1.6 2019-2023: The Large Model Race

From 2019 to 2023, language models moved from research demos into products used by millions of people.

1.6.1 Three Broad Camps

By 2023, the field had three recognizable camps:

| Organization | Representative work | Character |
|---|---|---|
| OpenAI | GPT-2, GPT-3, ChatGPT, GPT-4 | closed product, strongest consumer breakthrough |
| Google | BERT, Transformer research, Gemini | deep research base, slower product conversion |
| Meta | PyTorch, LLaMA | open models and tooling ecosystem |

This table is simplified, but useful. Different organizations pushed the same architecture through different paths: closed products, research platforms, open weights, and tooling.

1.6.2 GPT's Progression

The GPT line evolved roughly like this:

- GPT-1, 2018: showed that Transformer can work as a language model.

- GPT-2, 2019: scaled up to 1.5B parameters and surprised people with generation quality.

- GPT-3, 2020: 175B parameters and strong few-shot behavior.

- InstructGPT, 2022: used reinforcement learning from human feedback (RLHF) to make outputs follow instructions more usefully.

- ChatGPT, November 2022: made LLMs feel accessible to the public.

- GPT-4, 2023: pushed reasoning and multimodal understanding further.

The product turning point was ChatGPT. It gave non-specialists a direct interface to language models and made the technology legible.

1.7 2023: Governance Enters the Main Plot

In November 2023, OpenAI had a public governance crisis.

The five-day drama was unusually public: Sam Altman was removed as CEO, employees pushed back, Microsoft became the emergency landing pad, Altman returned, and the board changed shape.

The details are less important for this book than the lesson:

Frontier AI is not only a technical problem. It is also an institutional problem.

Models require organizations. Organizations have incentives, boards, investors, employees, safety concerns, product pressure, and public responsibilities.

When a model becomes important enough, architecture and governance are no longer separate topics.

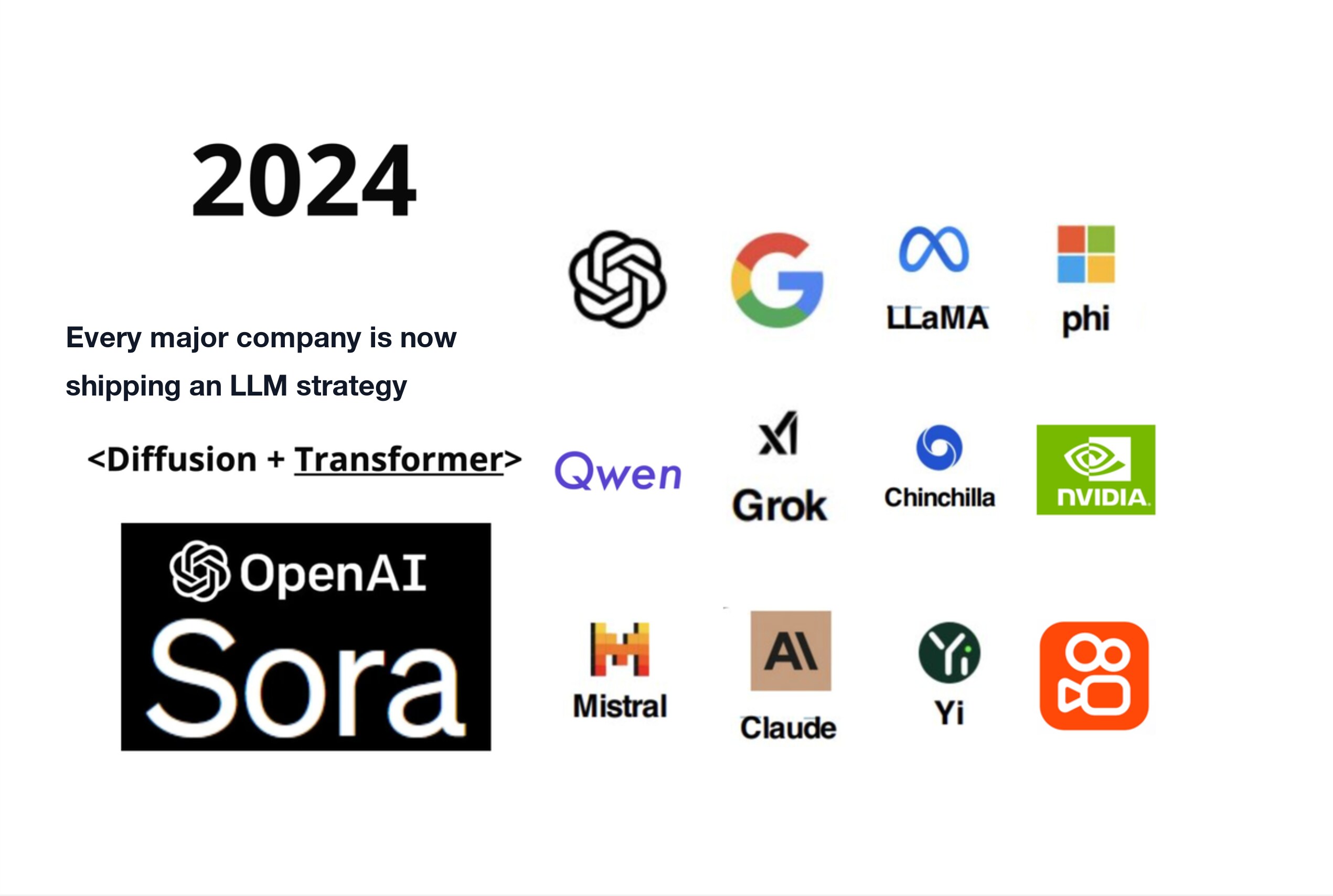

1.8 2024: A Broader AI Landscape

After the 2023 product shock, the field broadened quickly.

Important trends included:

- OpenAI pushing GPT-4-class models (such as GPT-4o) and video generation (Sora).

- Google advancing Gemini.

- Meta making LLaMA-style open models central to the ecosystem.

- Anthropic competing with Claude.

- Mistral becoming an important European model lab.

- Alibaba Qwen and other Chinese model families growing quickly.

- Microsoft's Phi series and other small models making local and specialized deployments practical.

The architecture started as a text model pattern, but it moved into multimodal systems, tool use, agents, code, and video.

One 2024 pattern is worth naming directly: Diffusion + Transformer. Sora made that direction visible. The exact product details matter less here than the architecture lesson: Transformer-style sequence modeling was no longer only about words; it was becoming part of how models organize video, images, actions, and time.

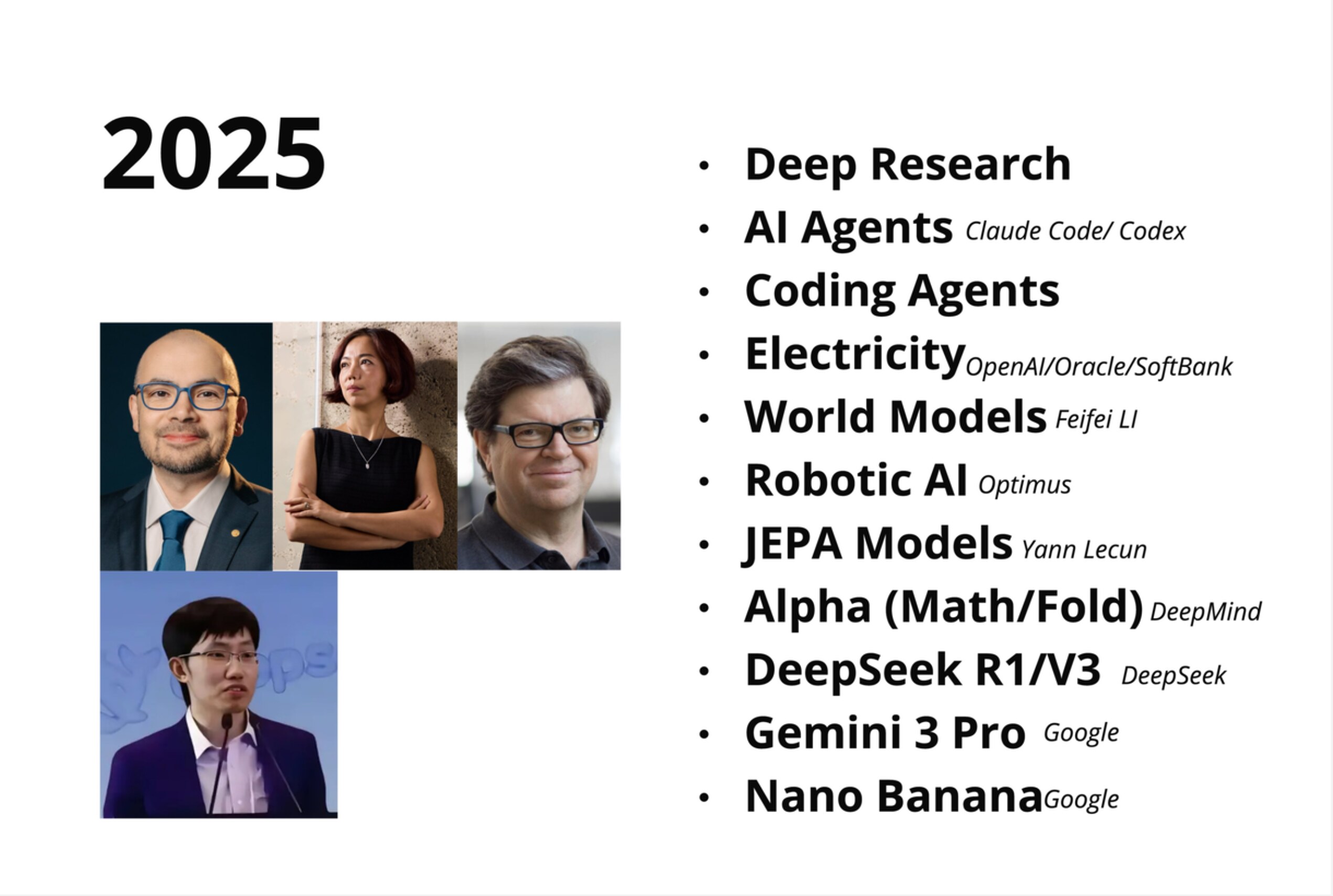

1.9 2025: From Chat to Action

By 2025, the direction was clear: models were moving from answering questions to taking action.

1.9.1 Agents

Agents use tools, inspect files, run commands, modify code, and keep working across steps. The important shift is autonomy: the model is not only producing text, it is operating inside a workflow.

1.9.2 Deep Research

Research systems search, read, compare, synthesize, and cite information across many sources. This is a natural extension of long-context language modeling plus tool use.

1.9.3 World Models

World models aim to understand how the physical world changes over time. This moves beyond language into state, causality, and embodied reasoning.

Fei-Fei Li's spatial-intelligence work points in this direction: models that understand scenes, objects, and physical relationships rather than only text patterns.

1.9.4 Robotics

Robotics combines vision, planning, control, and physical constraints. It is one of the hardest tests of whether AI can connect prediction to action.

Yann LeCun's JEPA (Joint Embedding Predictive Architecture) line of work makes a related argument from a different angle: prediction should happen in a learned representation space, not only as next-token text or raw pixels.

1.10 Chapter Summary

1.10.1 Key Timeline

| Year | Event | Why it matters |
|---|---|---|
| 2015 | AlphaGo and OpenAI | Deep learning becomes strategic |
| 2017 | "Attention Is All You Need" | Transformer appears |
| 2018 | GPT-1 | Transformer becomes a generation model |
| 2020 | GPT-3 | Scale shows surprising capability |
| 2022 | ChatGPT | LLMs enter public life |
| 2023 | GPT-4 and governance crisis | Capability and institutions both matter |
| 2024 | Multimodal and open models | Ecosystem broadens |
| 2025 | Agents and research systems | Models move from chat to action |

1.10.2 Two Core Ideas

- Transformer is the foundation. GPT, BERT, LLaMA, Gemini, Claude, and many other systems are built on Transformer-like ideas. Understanding the Transformer gives you the grammar of modern AI.

- Scale changes behavior. The jump from small language models to large ones is not only more of the same. GPT-1 had about 117 million parameters; GPT-3 had 175 billion, roughly 1,500 times more. OpenAI has not disclosed GPT-4's size, but outside estimates put it above a trillion, which would be about ten thousand times GPT-1. Larger models can show qualitatively different behavior, such as few-shot learning from a handful of examples in the prompt, that is weak or absent at smaller scale. Brute force worked. That is uncomfortable, but it is true.

Chapter Checklist

After this chapter, you should be able to:

- Explain where GPT came from in about three minutes.

- Describe why Transformer mattered compared with earlier sequence models.

- Name the different roles played by OpenAI, Google, Meta, and open-source ecosystems.

- Explain why AI labs are both technical and institutional systems.

See You in the Next Chapter

That is enough history for now. If you can tell the GPT story from 2015 to the agent era without checking the timeline, you have the right map in your head.

Next we will demystify the model itself.

Andrej Karpathy has a famous framing: a large language model is just two files. That sounds too simple, but it is a powerful way to understand what an LLM really is.